How to use OpenCV CUDA Streams


Latest revision as of 12:05, 23 August 2022


Introduction to OpenCV CUDA Streams

Dependencies

- G++ Compiler

- CUDA

- OpenCV >= 4.1 (compiled with CUDA Support)

- NVIDIA Nsight

OpenCV CUDA Streams example

The following example uses a sample input image and resizes it in four different streams.

Compile the example with:

g++ testStreams.cpp -o testStreams $(pkg-config --libs --cflags opencv4)

testStreams.cpp

#include <opencv2/opencv.hpp>

#include <opencv2/core/core.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/core/cuda.hpp>

#include <vector>

#include <memory>

#include <iostream>

std::shared_ptr<std::vector<cv::Mat>> computeArray(std::shared_ptr<std::vector< cv::cuda::HostMem >> srcMemArray,

std::shared_ptr<std::vector< cv::cuda::HostMem >> dstMemArray,

std::shared_ptr<std::vector< cv::cuda::GpuMat >> gpuSrcArray,

std::shared_ptr<std::vector< cv::cuda::GpuMat >> gpuDstArray,

std::shared_ptr<std::vector< cv::Mat >> outArray,

std::shared_ptr<std::vector< cv::cuda::Stream >> streamsArray){

//Define test target size

cv::Size rSize(256, 256);

//Compute for each input image with async calls

for(int i=0; i<4; i++){

//Upload Input Pinned Memory to GPU Mat

(*gpuSrcArray)[i].upload((*srcMemArray)[i], (*streamsArray)[i]);

//Use the CUDA Kernel Method

cv::cuda::resize((*gpuSrcArray)[i], (*gpuDstArray)[i], rSize, 0, 0, cv::INTER_AREA, (*streamsArray)[i]);

//Download result to Output Pinned Memory

(*gpuDstArray)[i].download((*dstMemArray)[i],(*streamsArray)[i]);

//Wrap the output Pinned Memory in a cv::Mat header (no copy);
//the pixel data is only valid once the stream has completed

(*outArray)[i] = (*dstMemArray)[i].createMatHeader();

}

//All previous calls are non-blocking, therefore

//wait for the completion of each stream

(*streamsArray)[0].waitForCompletion();

(*streamsArray)[1].waitForCompletion();

(*streamsArray)[2].waitForCompletion();

(*streamsArray)[3].waitForCompletion();

return outArray;

}

int main (int argc, char* argv[]){

//Load test image

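cv::Mat srcHostImage = cv::imread("1080.jpg");

//Abort if the test image could not be read

if(srcHostImage.empty()){

std::cerr << "Error: could not read 1080.jpg" << std::endl;

return 1;

}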

//Create CUDA Streams Array

std::shared_ptr<std::vector<cv::cuda::Stream>> streamsArray = std::make_shared<std::vector<cv::cuda::Stream>>();

cv::cuda::Stream streamA, streamB, streamC, streamD;

streamsArray->push_back(streamA);

streamsArray->push_back(streamB);

streamsArray->push_back(streamC);

streamsArray->push_back(streamD);

//Create Pinned Memory (PAGE_LOCKED) arrays

std::shared_ptr<std::vector<cv::cuda::HostMem >> srcMemArray = std::make_shared<std::vector<cv::cuda::HostMem >>();

std::shared_ptr<std::vector<cv::cuda::HostMem >> dstMemArray = std::make_shared<std::vector<cv::cuda::HostMem >>();

//Create GpuMat arrays to use them on OpenCV CUDA Methods

std::shared_ptr<std::vector< cv::cuda::GpuMat >> gpuSrcArray = std::make_shared<std::vector<cv::cuda::GpuMat>>();

std::shared_ptr<std::vector< cv::cuda::GpuMat >> gpuDstArray = std::make_shared<std::vector<cv::cuda::GpuMat>>();

//Create Output array for CPU Mat

std::shared_ptr<std::vector< cv::Mat >> outArray = std::make_shared<std::vector<cv::Mat>>();

for(int i=0; i<4; i++){

//Define GPU Mats

cv::cuda::GpuMat srcMat;

cv::cuda::GpuMat dstMat;

//Define CPU Mat

cv::Mat outMat;

//Initialize the Pinned Memory with input image

cv::cuda::HostMem srcHostMem = cv::cuda::HostMem(srcHostImage, cv::cuda::HostMem::PAGE_LOCKED);

//Initialize the output Pinned Memory from the (still empty) output Mat;
//its buffer is allocated on the first download

cv::cuda::HostMem srcDstMem = cv::cuda::HostMem(outMat, cv::cuda::HostMem::PAGE_LOCKED);

//Add elements to each array.

srcMemArray->push_back(srcHostMem);

dstMemArray->push_back(srcDstMem);

gpuSrcArray->push_back(srcMat);

gpuDstArray->push_back(dstMat);

outArray->push_back(outMat);

}

//Test the process 20 times

for(int i=0; i<20; i++){

try{

std::shared_ptr<std::vector<cv::Mat>> result = computeArray(srcMemArray, dstMemArray, gpuSrcArray, gpuDstArray, outArray, streamsArray);

//Optional to show the results

//cv::imshow("Result", (*result)[0]);

//cv::waitKey(0);

}

catch(const cv::Exception& ex){

std::cout << "Error: " << ex.what() << std::endl;

}

}

return 0;

}

Profiling with NVIDIA Nsight

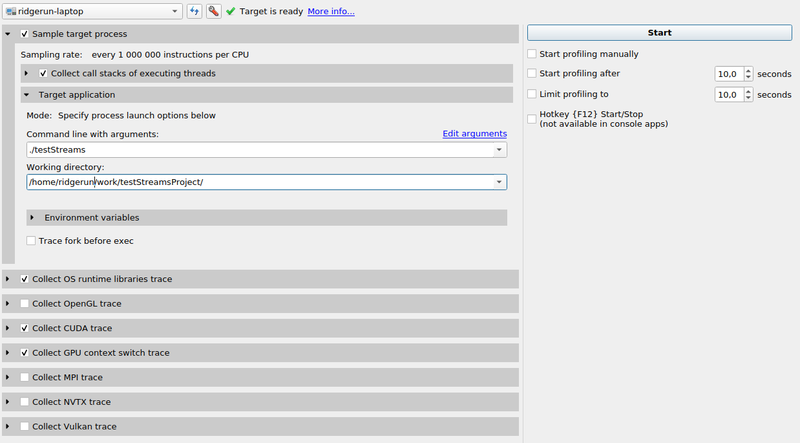

Profile the testStreams program with NVIDIA Nsight

- Add the command line and working directory

- Select Collect CUDA trace

- Select Collect GPU context switch trace

As seen in the following image:

Click Start to begin profiling. The capture must also be stopped manually once the program has finished.

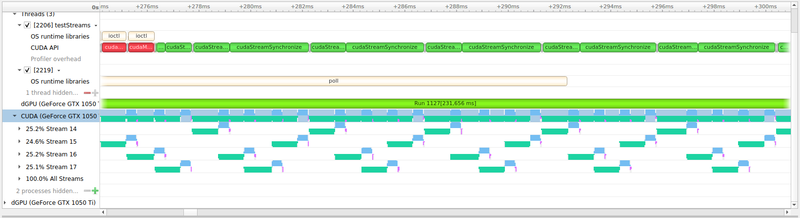

Analyze the output

You will get an output similar to the following:

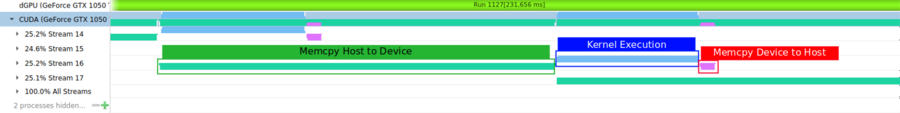

Information

Each color identifies the type of operation being executed at that point in the timeline:

- Green box: Memory copy operations from Host to Device

- Blue box: Kernel execution on the Device

- Red box: Memory copy operations from Device to Host

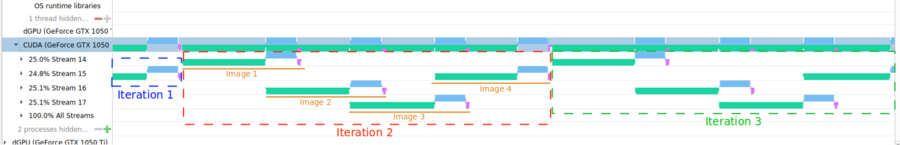

Understanding CUDA Streams pipelining

CUDA Streams make it possible to build an execution pipeline: while a Host to Device copy is in progress on one stream, a kernel can run on another, and the same applies to Device to Host copies. The pipeline can be analyzed in the following image.

Each iteration of the test loop in main (one call to computeArray) is represented in the image as a box.

- Blue box: Iteration 1

- Red box: Iteration 2

- Green box: Iteration 3

Within each iteration, the four images are processed in a pipelined fashion.
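To get a feel for how much the overlap can save, the sketch below compares a fully serialized schedule with a pipelined one. It is a simplified model, not a measurement: the struct and function names are hypothetical, the stage durations are made-up time units, and it assumes the device has one engine for uploads, one for kernels and one for downloads, each handling a single operation at a time. Whether a given GPU overlaps copies and kernels this way depends on the hardware.

```cpp
#include <algorithm>

// Hypothetical per-image stage durations, in arbitrary time units.
struct StageTimes {
    double hostToDevice;  // upload (green box in the timeline)
    double kernel;        // resize kernel (blue box)
    double deviceToHost;  // download (red box)
};

// Total time when every operation of every image runs back to back.
double serialTime(int images, const StageTimes& t) {
    return images * (t.hostToDevice + t.kernel + t.deviceToHost);
}

// Total time when each image uses its own stream and the three engines
// (upload, compute, download) can work on different images at once.
double pipelinedTime(int images, const StageTimes& t) {
    double uploadFree = 0.0;    // time at which the upload engine is idle
    double computeFree = 0.0;   // time at which the compute engine is idle
    double downloadFree = 0.0;  // time at which the download engine is idle
    for (int i = 0; i < images; ++i) {
        // Uploads are issued one after another on the upload engine.
        uploadFree += t.hostToDevice;
        // A kernel starts once its upload is done and the engine is idle.
        computeFree = std::max(computeFree, uploadFree) + t.kernel;
        // A download starts once its kernel is done and the engine is idle.
        downloadFree = std::max(downloadFree, computeFree) + t.deviceToHost;
    }
    return downloadFree;
}
```

With four images and illustrative durations of 2, 3 and 1 units, serialTime(4, StageTimes{2, 3, 1}) gives 24 units while pipelinedTime(4, StageTimes{2, 3, 1}) gives 15, because in this model the kernel stage becomes the bottleneck and the copies are hidden behind it.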
