GstInference/Supported backends/NCSDK

Revision as of 06:22, 19 December 2018

Make sure you also check GstInference's companion project: R2Inference

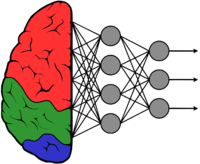

The Intel® Movidius™ Neural Compute SDK (Intel® Movidius™ NCSDK) enables deployment of deep neural networks (DNNs) on compatible devices such as the Intel® Movidius™ Neural Compute Stick. The NCSDK includes a set of software tools to compile, profile, and validate DNNs, as well as C/C++ and Python APIs for application development.

The NCSDK has two general usages:

- Profiling, tuning, and compiling DNN models.

- Prototyping user applications that run accelerated on neural compute device hardware through the NCAPI.
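The application-side workflow (open a device, load a compiled graph, queue an input, read the result) can be pictured with a short Python sketch. It assumes NCSDK 2.x and its `mvnc` Python package (NCSDK 1.x exposes a different API), a graph file already produced by the NCSDK compiler, and an input tensor already preprocessed as a float32 array in the shape the network expects. The names `run_inference` and `top_k` are hypothetical helpers for illustration, not part of GstInference, R2Inference, or the NCSDK.

```python
"""Minimal NCAPI inference sketch (assumes NCSDK 2.x and its `mvnc` package)."""


def top_k(scores, k=5):
    """Return (index, score) pairs for the k highest scores, highest first."""
    ranked = sorted(enumerate(scores), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]


def run_inference(graph_path, input_tensor):
    """Run one inference on the first Neural Compute device found.

    Assumption: `graph_path` points to a graph compiled with the NCSDK tools,
    and `input_tensor` is a float32 numpy array shaped for that network.
    """
    # Imported lazily so this module can be loaded without the SDK installed.
    from mvnc import mvncapi

    devices = mvncapi.enumerate_devices()
    if not devices:
        raise RuntimeError("no Neural Compute device found")

    device = mvncapi.Device(devices[0])
    device.open()
    try:
        with open(graph_path, "rb") as graph_file:
            graph_buffer = graph_file.read()

        # Load the compiled graph onto the device and create its I/O FIFOs.
        graph = mvncapi.Graph("graph")
        input_fifo, output_fifo = graph.allocate_with_fifos(device, graph_buffer)
        try:
            # Queue a single input tensor and block until its result is read.
            graph.queue_inference_with_fifo_elem(
                input_fifo, output_fifo, input_tensor, "user object"
            )
            output, _user_obj = output_fifo.read_elem()
        finally:
            input_fifo.destroy()
            output_fifo.destroy()
            graph.destroy()
    finally:
        # Always release the device, even if loading or inference failed.
        device.close()
        device.destroy()
    return output
```

For a classification network, `top_k(run_inference("graph", tensor))` would list the five highest-scoring classes. The outer `try`/`finally` keeps the device from being left open if an inference call fails.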

Installation

You can install the NCSDK directly on a Linux system, in a Docker container, on a virtual machine, or in a Python virtual environment. All the possible installation paths are documented in the official installation guide.

Tools